Vector search evaluation metric design

A standard for managing search quality by interpreting Recall@k, MRR, and nDCG in the context of the service

Introduction

Vector search quality is not determined by the choice of embedding model alone. In practice, index parameters, reranking, and the quality of the evaluation set largely determine the results, yet these factors are often left out of evaluation. This article covers how to improve search quality continuously by linking offline and online metrics.

Problem definition

Search accuracy is often low without anyone being able to identify the cause. Typical reasons:

- The evaluation set does not reflect real user queries, so offline scores diverge from perceived quality.

- Only recall is tracked and precision is ignored, so noise documents accumulate in the results.

- Index parameter experiments are not recorded, so the same regressions recur.

Evaluation design therefore needs metrics at several levels rather than a single metric: Recall@k, nDCG, response latency, and cost must be read together.
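To make the second problem concrete, precision can be computed next to recall on the same ranked list. The following is a minimal sketch; the function name `precisionAtK` and the sample ids are illustrative assumptions, and the denominator is taken to be `k` (the common convention), not the number of results actually returned.

```typescript
// Precision@k: fraction of the top-k slots filled with relevant documents.
// Assumption: the denominator is k, so a short result list is penalized.
export function precisionAtK(retrieved: string[], relevant: Set<string>, k: number): number {
  if (k <= 0) return 0;
  const hits = retrieved.slice(0, k).filter((id) => relevant.has(id)).length;
  return hits / k;
}

// Hypothetical query: both relevant documents are found (recall is perfect),
// but three of the five returned documents are noise.
const sampleRetrieved = ["a", "x", "b", "y", "z"];
const sampleRelevant = new Set(["a", "b"]);
export const samplePrecision = precisionAtK(sampleRetrieved, sampleRelevant, 5);
```

In this hypothetical case every relevant document is retrieved while more than half of the list is noise, which is the kind of gap that a recall-only dashboard hides.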

Key concepts

| Perspective | Design criteria | Verification points |
|---|---|---|
| Offline accuracy | Recall@k + nDCG | Trend on the test set |
| Online quality | Post-search click/completion rate | Real user experience |
| Performance | Query latency + QPS | SLO compliance rate |
| Cost | Token/embedding cost | Cost per request |
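The performance row can be computed directly from raw latency samples. The sketch below assumes latencies are collected in milliseconds and uses the nearest-rank percentile definition; `percentile` and `sloComplianceRate` are hypothetical helper names, not part of any library.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
export function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  // Clamp the 1-based rank into the valid index range.
  const index = Math.min(Math.max(rank, 1), sorted.length) - 1;
  return sorted[index];
}

// SLO compliance rate: share of requests that finished within the SLO budget.
export function sloComplianceRate(latenciesMs: number[], sloMs: number): number {
  if (latenciesMs.length === 0) return 0;
  const withinBudget = latenciesMs.filter((ms) => ms <= sloMs).length;
  return withinBudget / latenciesMs.length;
}
```

Reporting both values is useful because a p95 figure alone does not say how many requests exceeded the agreed budget.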

Metrics can conflict with each other. Raising quality can raise cost, and raising speed can lower accuracy, so the priority among goals must be explicit.
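One way to make the priority explicit is to encode the goals as an ordered list of constraints and report the first one that fails. This is only a sketch: the `Metrics` fields, the `firstViolation` helper, and every threshold below are assumptions chosen for illustration, not recommended values.

```typescript
interface Metrics {
  recallAt10: number;
  ndcgAt10: number;
  latencyP95Ms: number;
  costPer1kQueries: number;
}

interface Constraint {
  name: string;
  ok: (m: Metrics) => boolean;
}

// Constraints are checked in priority order; the first failure is reported.
export function firstViolation(m: Metrics, constraints: Constraint[]): string | null {
  for (const c of constraints) {
    if (!c.ok(m)) return c.name;
  }
  return null;
}

// Hypothetical priority: latency SLO first, then quality, then cost.
export const priorities: Constraint[] = [
  { name: "latency_p95_ms", ok: (m) => m.latencyP95Ms <= 120 },
  { name: "recall_at_10", ok: (m) => m.recallAt10 >= 0.8 },
  { name: "cost_per_1k_queries", ok: (m) => m.costPer1kQueries <= 0.5 },
];
```

With this ordering, an experiment that misses both the latency and the cost target is rejected for latency, which documents which goal the team considers more important.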

Code example 1: Recall@k and nDCG@k calculation

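// Recall@k: fraction of the relevant documents that appear in the top k results.
export function recallAtK(retrieved: string[], relevant: Set<string>, k: number): number {
  // De-duplicate so a repeated id cannot be counted as more than one hit.
  const topK = Array.from(new Set(retrieved.slice(0, k)));
  const hit = topK.filter((id) => relevant.has(id)).length;
  // Math.max avoids division by zero when no relevant documents are labeled.
  return hit / Math.max(relevant.size, 1);
}

// nDCG@k: `gains` holds the graded relevance of each result in ranked order.
// The ideal ordering is built from the same gains, so relevant documents that
// were never retrieved do not lower the score.
export function ndcgAtK(gains: number[], k: number): number {
  const top = gains.slice(0, k);
  const dcg = top.reduce((acc, gain, i) => acc + gain / Math.log2(i + 2), 0);
  const ideal = [...gains].sort((a, b) => b - a).slice(0, k);
  const idcg = ideal.reduce((acc, gain, i) => acc + gain / Math.log2(i + 2), 0);
  return idcg === 0 ? 0 : dcg / idcg;
}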
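MRR, the third metric named at the top of this article, can be sketched in the same style. It rewards placing the first relevant document as high as possible, which suits services where users read only the first answer. The names `reciprocalRank`, `meanReciprocalRank`, and `QueryRun` are illustrative assumptions.

```typescript
interface QueryRun {
  retrieved: string[];   // ranked result ids for one query
  relevant: Set<string>; // ids labeled relevant for that query
}

// Reciprocal rank of the first relevant result, or 0 if none was retrieved.
export function reciprocalRank(retrieved: string[], relevant: Set<string>): number {
  const index = retrieved.findIndex((id) => relevant.has(id));
  return index === -1 ? 0 : 1 / (index + 1);
}

// Mean reciprocal rank over all queries in the evaluation set.
export function meanReciprocalRank(runs: QueryRun[]): number {
  if (runs.length === 0) return 0;
  const total = runs.reduce((acc, run) => acc + reciprocalRank(run.retrieved, run.relevant), 0);
  return total / runs.length;
}
```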

Code example 2: Experiment registry record

INSERT INTO vector_search_experiments (
  experiment_id,
  embedding_model,
  index_type,
  top_k,
  recall_at_10,
  ndcg_at_10,
  latency_p95_ms,
  cost_per_1k_queries
) VALUES (
  'exp_20260303_hnsw_m64',
  'text-embedding-3-large',
  'hnsw',
  20,
  0.84,
  0.79,
  145,
  0.63
);
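Once records like this exist, a new experiment can be compared against a baseline to catch regressions before rollout. The sketch below assumes the rows have already been loaded into objects; `ExperimentRecord`, `findRegressions`, the default tolerances, and the candidate values in the usage note are hypothetical.

```typescript
interface ExperimentRecord {
  experimentId: string;
  recallAt10: number;
  ndcgAt10: number;
  latencyP95Ms: number;
}

interface Tolerance {
  quality: number;   // allowed absolute drop in recall or nDCG
  latencyMs: number; // allowed increase in p95 latency
}

// Returns the column names of metrics that got worse by more than the tolerance.
export function findRegressions(
  baseline: ExperimentRecord,
  candidate: ExperimentRecord,
  tolerance: Tolerance = { quality: 0.01, latencyMs: 10 },
): string[] {
  const regressions: string[] = [];
  if (baseline.recallAt10 - candidate.recallAt10 > tolerance.quality) regressions.push("recall_at_10");
  if (baseline.ndcgAt10 - candidate.ndcgAt10 > tolerance.quality) regressions.push("ndcg_at_10");
  if (candidate.latencyP95Ms - baseline.latencyP95Ms > tolerance.latencyMs) regressions.push("latency_p95_ms");
  return regressions;
}
```

For example, against the record above, a hypothetical candidate with recall 0.86, nDCG 0.75, and a p95 of 150 ms would be flagged only for `ndcg_at_10`: recall improved, and the latency increase stays inside the assumed 10 ms tolerance.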

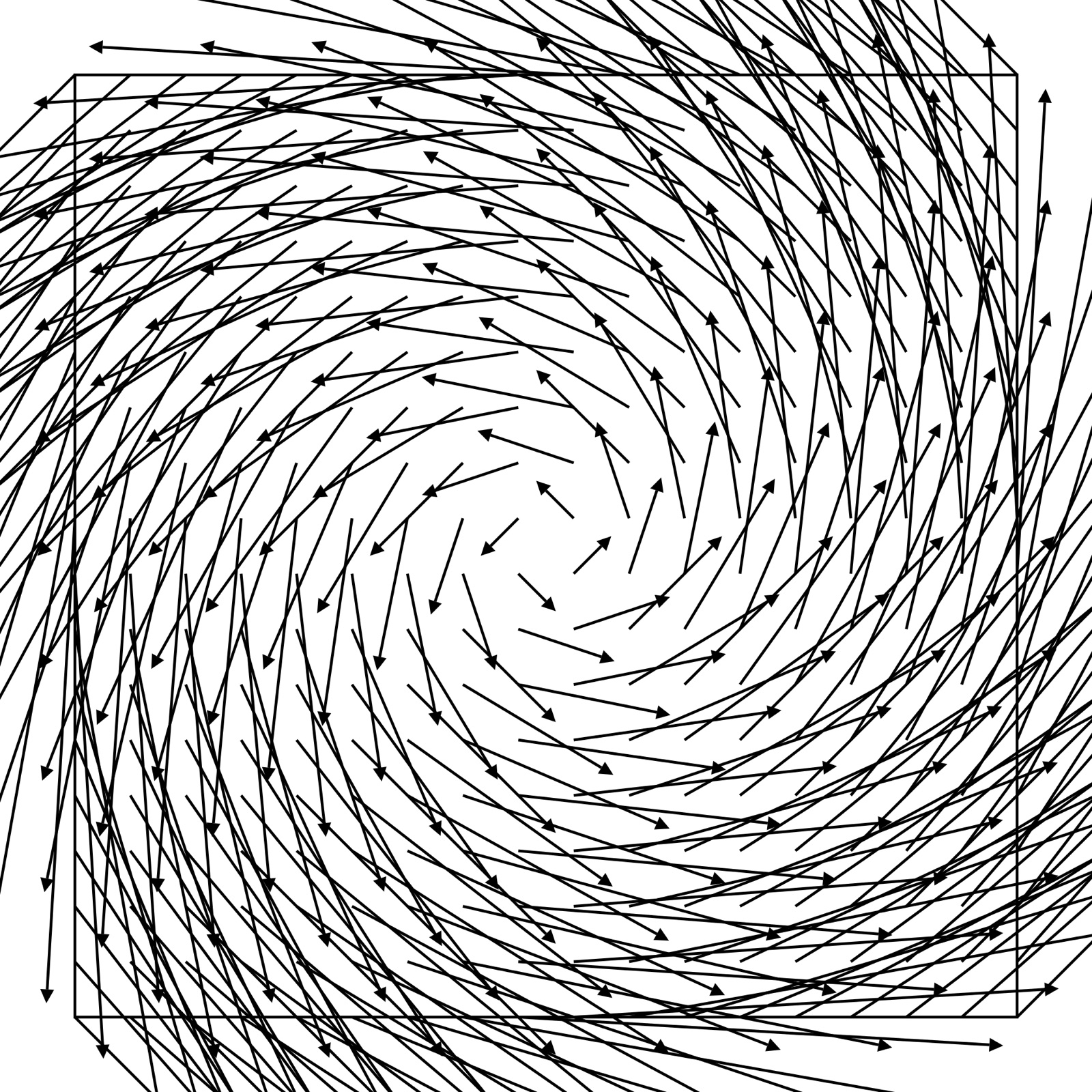

Architecture flow

Tradeoffs

- Increasing top-k raises recall, but latency and cost rise as well.

- Adding reranking improves quality, but increases pipeline complexity.

- Maintaining the evaluation set has a cost, but it allows quality regressions to be detected early.
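The first tradeoff can be inspected by sweeping k over one ranked list and watching where recall stops improving. This is a rough sketch with made-up ids; `recallCurve` is a hypothetical helper, and the latency and cost side of the tradeoff would have to be measured separately on the real system.

```typescript
// Recall at each requested cutoff for a single query.
export function recallCurve(
  retrieved: string[],
  relevant: Set<string>,
  ks: number[],
): { k: number; recall: number }[] {
  return ks.map((k) => {
    // De-duplicate the top-k ids before counting hits.
    const seen = new Set(retrieved.slice(0, k));
    let hits = 0;
    seen.forEach((id) => {
      if (relevant.has(id)) hits += 1;
    });
    return { k, recall: hits / Math.max(relevant.size, 1) };
  });
}

// Hypothetical ranked list: relevant ids are a, b, c, d; d is never retrieved.
export const curve = recallCurve(
  ["a", "x", "b", "y", "c", "z"],
  new Set(["a", "b", "c", "d"]),
  [1, 3, 5, 6],
);
```

Recall never decreases as k grows, so the useful question is the point at which a larger k stops adding hits while still adding latency and cost.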

Summary

Vector search evaluation is a system optimization problem, not a model comparison. Tracking offline accuracy together with online metrics and keeping an experiment history makes steady quality improvement possible.

Image source

- Cover: source link

- License: Public domain / Author: Fibonacci.

- Note: After downloading the free license image from Wikimedia Commons, it was optimized to JPG at 1600px.