RAG Chunking Strategy Field Guide

How to improve RAG quality by measuring the correlation between document segmentation rules and search accuracy

Introduction

When RAG quality is low, many teams start by swapping the LLM or the embedding model. In practice, though, the root cause is often the chunking rules. Even on the same dataset, retrieval accuracy can vary widely with chunk size, overlap, and metadata policy.

This article explains how to treat chunking as “a variable you can experiment on.” The point is to choose a chunking strategy based on metrics, not intuition.

Problem definition

Problems that come up often when a team first adopts RAG:

- Splitting documents into large chunks raises recall, but the evidence that supports the correct answer gets diluted.

- Splitting too finely still lets retrieval find matches, but answer quality drops because each chunk lacks context.

- The same chunking policy is applied to every document type (guides, FAQs, code documentation).

- Offline evaluation looks good, but the quality users experience online is low.

The key is to design “document structure-based chunking” and a “question type-based evaluation set” together.
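
One way to make the evaluation set "question type-based" is to tag every sample with a category and report recall per category, so a policy that helps one kind of question but hurts another is visible. The sketch below is a minimal illustration; the `QuestionType` categories, the `EvalResult` shape, and the `recallByQuestionType` name are assumptions, not something the article prescribes.

```typescript
// Hypothetical question categories; adapt them to your own traffic.
type QuestionType = "factoid" | "how-to" | "code";

type EvalResult = {
  question: string;
  type: QuestionType;
  hit: boolean; // true if the expected source appeared in the top-K results
};

// Groups per-question hit/miss results and reports recall for each question type.
export function recallByQuestionType(
  results: EvalResult[],
): Record<string, { total: number; recall: number }> {
  const totals: Record<string, number> = {};
  const hits: Record<string, number> = {};
  for (const r of results) {
    totals[r.type] = (totals[r.type] ?? 0) + 1;
    hits[r.type] = (hits[r.type] ?? 0) + (r.hit ? 1 : 0);
  }
  const report: Record<string, { total: number; recall: number }> = {};
  for (const type of Object.keys(totals)) {
    report[type] = { total: totals[type], recall: hits[type] / totals[type] };
  }
  return report;
}
```

Types with no samples are simply absent from the report, which makes gaps in the evaluation set easy to spot.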

Key concepts

| Variable | Description | Recommended starting value |
|---|---|---|
| chunk_size | Length of one chunk in tokens or characters | 350-550 tokens |
| overlap | Share of text repeated between adjacent chunks | 10-20% |
| splitter | Split on paragraph, header, or code-block boundaries | Structure-first splitting |
| metadata | section, source, updated_at | Always attach |

In production, a “policy per document type” tends to work better than a single optimal value applied everywhere.
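
A per-document-type policy can be expressed as a small lookup table. The sketch below is only an illustration: the `DocType` names follow the guide/FAQ/code split mentioned earlier, and the specific numbers are placeholder picks from inside the table's starting ranges, not measured results.

```typescript
type DocType = "guide" | "faq" | "code";

type ChunkPolicy = {
  chunkSize: number; // target chunk length in tokens
  overlapRatio: number; // fraction of chunkSize repeated in the next chunk
  splitter: "header" | "paragraph" | "code-block";
};

// Illustrative values only, chosen from the 350-550 token / 10-20% starting ranges.
const POLICIES: Record<DocType, ChunkPolicy> = {
  guide: { chunkSize: 550, overlapRatio: 0.2, splitter: "header" },
  faq: { chunkSize: 350, overlapRatio: 0.1, splitter: "paragraph" },
  code: { chunkSize: 400, overlapRatio: 0.15, splitter: "code-block" },
};

// Returns the policy for a document type, with the overlap resolved to whole tokens.
export function policyFor(docType: DocType): ChunkPolicy & { overlapTokens: number } {
  const policy = POLICIES[docType];
  return {
    ...policy,
    overlapTokens: Math.round(policy.chunkSize * policy.overlapRatio),
  };
}
```

Keeping the policies in one table makes each value an explicit experiment variable that can be changed and re-evaluated per document type.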

Code Example 1: Structure-Based Chunker

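// One retrievable unit plus the metadata needed to trace it back to its origin.
type Chunk = {
  id: string;
  text: string;
  source: string;
  section: string;
};

// Splits markdown on "## " headings, then windows each section body.
// Note: size and overlap are counted in characters here, not tokens.
export function chunkBySection(input: {
  source: string;
  markdown: string;
  size: number;
  overlap: number;
}): Chunk[] {
  // Text before the first "## " heading becomes a section titled by its first line.
  const sections = input.markdown.split(/^##\s+/m).filter(Boolean);
  const chunks: Chunk[] = [];

  for (const raw of sections) {
    const [sectionTitle, ...rest] = raw.split("\n");
    const body = rest.join("\n").trim();
    let cursor = 0;

    while (cursor < body.length) {
      const end = Math.min(body.length, cursor + input.size);
      chunks.push({
        id: `${input.source}-${chunks.length + 1}`,
        text: body.slice(cursor, end),
        source: input.source,
        section: sectionTitle.trim(),
      });
      // Stop once the body is consumed; stepping back by the overlap here
      // would emit extra chunks that only repeat the tail.
      if (end === body.length) break;
      // Always advance by at least one character, even if overlap >= size.
      cursor = Math.max(end - input.overlap, cursor + 1);
    }
  }
  return chunks;
}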
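
Code Example 1 measures size and overlap in characters, while the starting values in the table are given in tokens. As a rough bridge, the sketch below windows text by whitespace-separated words. This is an approximation, since words are not model tokens; the `windowByWords` name and the word-based counting are assumptions made for illustration, and a real tokenizer would replace the `split` call.

```typescript
// Splits text into windows of up to `size` whitespace-delimited words,
// repeating `overlap` words between neighbouring windows.
// Words only approximate tokens; swap in a real tokenizer for accurate counts.
export function windowByWords(text: string, size: number, overlap: number): string[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new Error("expected size > 0 and 0 <= overlap < size");
  }
  const words = text.split(/\s+/).filter(Boolean);
  const windows: string[] = [];
  const stride = size - overlap;
  for (let start = 0; start < words.length; start += stride) {
    windows.push(words.slice(start, start + size).join(" "));
    // Stop when this window already reached the end of the text.
    if (start + size >= words.length) break;
  }
  return windows;
}
```

For example, `windowByWords("a b c d e f g", 4, 1)` yields two windows that share the word `d`.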

Code Example 2: Offline Evaluation (Recall@K)

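type Sample = { question: string; expectedSource: string };

// Assumed to be provided by your retrieval layer (vector store or search client).
declare function searchChunks(
  question: string,
  options: { topK: number },
): Promise<{ source: string }[]>;

// Recall@K: the share of questions whose expected source appears in the top-K results.
export async function evaluateRecallAtK(samples: Sample[], k = 5) {
  let hit = 0;
  for (const sample of samples) {
    const retrieved = await searchChunks(sample.question, { topK: k });
    const ok = retrieved.some((item) => item.source === sample.expectedSource);
    if (ok) hit += 1;
  }
  return {
    total: samples.length,
    // Guard against division by zero on an empty evaluation set.
    recallAtK: samples.length === 0 ? 0 : hit / samples.length,
  };
}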
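
To treat chunking as an experiment variable, the Recall@K measurement can be repeated over a grid of chunk settings and the runs compared afterwards. The sketch below covers only the bookkeeping around that loop; `ChunkConfig`, `RunResult`, `buildGrid`, and `pickBest` are hypothetical names, and the tie-break toward fewer chunks is one possible choice, not a rule from the article.

```typescript
type ChunkConfig = { size: number; overlap: number };
type RunResult = { config: ChunkConfig; recallAtK: number; chunkCount: number };

// Builds every size/overlap combination, skipping invalid ones where overlap >= size.
export function buildGrid(sizes: number[], overlaps: number[]): ChunkConfig[] {
  const grid: ChunkConfig[] = [];
  for (const size of sizes) {
    for (const overlap of overlaps) {
      if (overlap < size) grid.push({ size, overlap });
    }
  }
  return grid;
}

// Picks the run with the highest Recall@K; ties go to the run with fewer chunks,
// on the assumption that a smaller index is cheaper to store and search.
export function pickBest(runs: RunResult[]): RunResult | undefined {
  return [...runs].sort(
    (a, b) => b.recallAtK - a.recallAtK || a.chunkCount - b.chunkCount,
  )[0];
}
```

Each grid entry would be re-chunked, re-indexed, and evaluated with the same sample set, so the only thing that changes between runs is the chunking policy.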

Architecture flow

Tradeoffs

- Large chunks preserve context well, but each retrieved result carries more noise.

- Small chunks match queries precisely, but the evidence for an answer gets fragmented across chunks.

- Increasing overlap raises recall, but storage cost and the chance of duplicated content in responses rise with it.
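
The storage side of the overlap tradeoff can be estimated before indexing anything. The sketch below assumes fixed-size sliding windows where each chunk starts `size - overlap` tokens after the previous one and the last chunk may be shorter; `estimateChunks` is a hypothetical helper, and real splitters that respect section boundaries will deviate from this estimate.

```typescript
// Estimates chunk count and stored-token amplification for a sliding window
// with the given chunk size and overlap (both in tokens).
export function estimateChunks(totalTokens: number, size: number, overlap: number) {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new Error("expected size > 0 and 0 <= overlap < size");
  }
  if (totalTokens <= 0) {
    return { chunkCount: 0, storedTokens: 0, amplification: 0 };
  }
  const stride = size - overlap;
  // One chunk covers the first `size` tokens; each further chunk adds `stride` new tokens.
  const chunkCount =
    totalTokens <= size ? 1 : Math.ceil((totalTokens - size) / stride) + 1;
  // Every chunk after the first stores `overlap` tokens a second time.
  const storedTokens = totalTokens + (chunkCount - 1) * overlap;
  return { chunkCount, storedTokens, amplification: storedTokens / totalTokens };
}
```

For a 1,000-token document with 500-token chunks, an overlap of 100 tokens gives 3 chunks and 1,200 stored tokens, which is 20% more than the source text.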

Summary

In RAG quality tuning, chunking is one of the cheapest and most effective levers. By combining segmentation that reflects the document structure with a Recall@K-based evaluation loop, quality can be improved steadily without replacing the model.

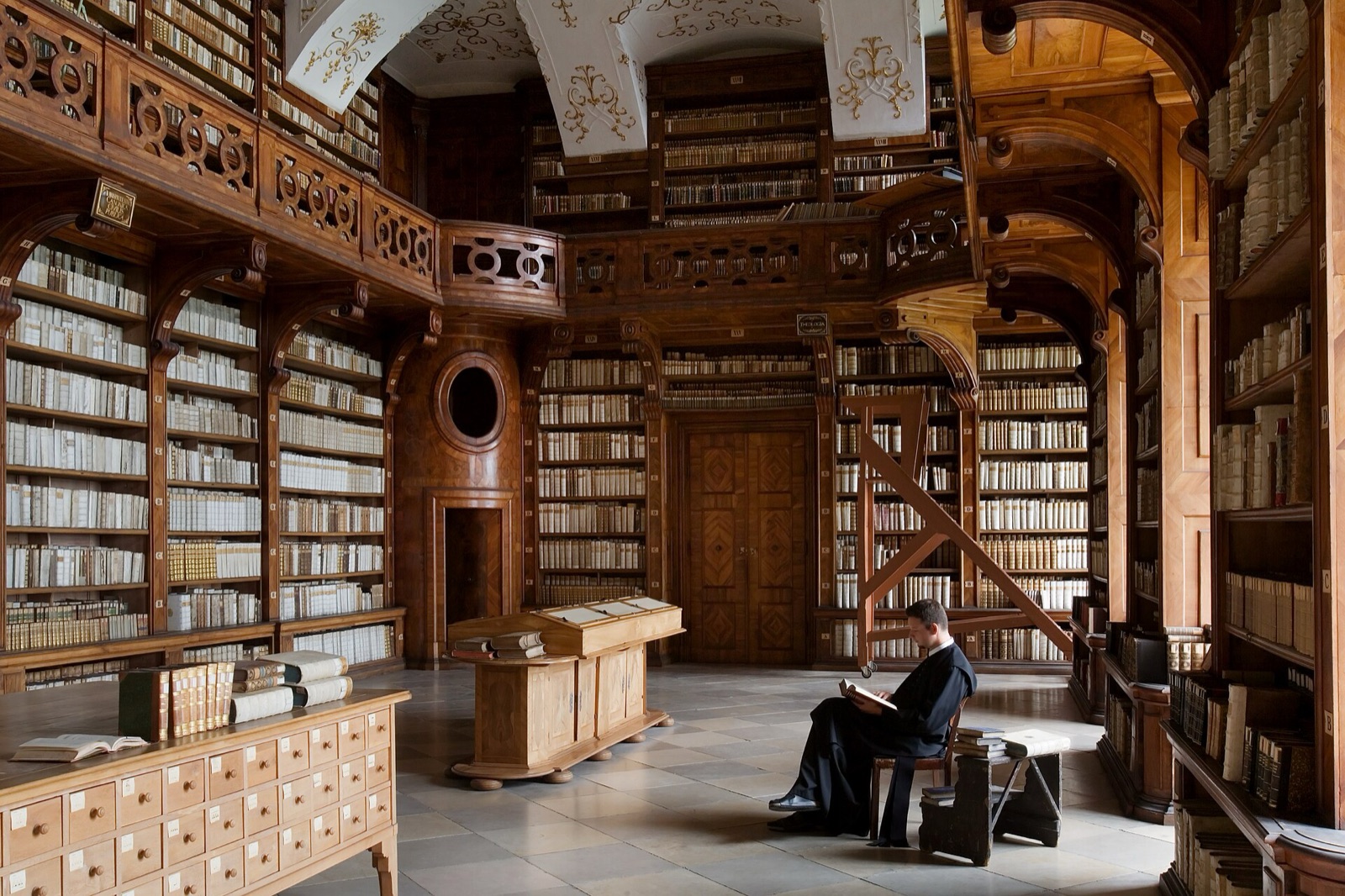

Image source

- Cover: source link

- License: CC BY-SA 3.0 / Author: Jorge Royan

- Note: The freely licensed image was downloaded from Wikimedia Commons and optimized to a 1600px JPG.