Prompt versioning and A/B testing

A practical method for tracking quality changes quantitatively by rolling out prompt changes as experiments

Introduction

If you don't manage prompts like code, output quality is left to chance. Even small wording changes can noticeably shift response style and accuracy, so version tracking and experimental design are essential in practice. This article presents an operating model for prompt version management and A/B testing.

Problem definition

Failures in prompt operations begin with a lack of reproducibility.

- No one records which prompt version is live at deployment time, so the cause of a regression cannot be traced.

- Prompts are edited on intuition, without an evaluation set, so short-term improvements turn into long-term degradation.

- Experiment results are not documented, so the same experiments get repeated.

Prompts are product assets, not configuration. They need a pipeline with versioning, experimentation, and deployment gates.

Key concepts

| Aspect | Design criterion | Verification point |
|---|---|---|
| Version control | Prompt ID + semver | Deployed version is traceable |
| Evaluation | Fixed benchmark set | Accuracy and safety scores |
| Experiment | Traffic-splitting A/B test | Statistical significance |
| Deployment | Promotion gate | Regression detection speed |

Improving prompts may look like creative work, but it is closer to experimental engineering. Improvement is only reliable when output quality is measured against the same inputs for every version.
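
Measuring against the same inputs can be sketched as a small benchmark harness. This is a minimal illustration under stated assumptions, not a definitive implementation: `BenchmarkCase`, `Generate`, `evaluatePrompt`, and `compareOnBenchmark` are hypothetical names, the model call is replaced by a synchronous stand-in, and the scoring rule is left to the caller.

```typescript
// Hypothetical types for illustration; none of these names come from a real library.
type BenchmarkCase = {
  input: string;
  // Scores one model output in [0, 1]; the scoring rule itself is an assumption.
  score: (output: string) => number;
};

// Stand-in for a model call. A real client would be asynchronous.
type Generate = (template: string, input: string) => string;

// Mean score of one prompt template over the whole fixed benchmark set.
function evaluatePrompt(template: string, cases: BenchmarkCase[], generate: Generate): number {
  if (cases.length === 0) {
    throw new Error("benchmark set is empty");
  }
  let total = 0;
  for (const c of cases) {
    total += c.score(generate(template, c.input));
  }
  return total / cases.length;
}

// Runs baseline and candidate on identical inputs and reports the difference.
function compareOnBenchmark(
  baselineTemplate: string,
  candidateTemplate: string,
  cases: BenchmarkCase[],
  generate: Generate
): { baseline: number; candidate: number; delta: number } {
  const baseline = evaluatePrompt(baselineTemplate, cases, generate);
  const candidate = evaluatePrompt(candidateTemplate, cases, generate);
  return { baseline, candidate, delta: candidate - baseline };
}
```

Because both templates see exactly the same cases, a positive `delta` reflects the prompt change rather than a change in inputs.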

Code example 1: Prompt registry

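    // One versioned prompt entry in the registry.
    export type PromptVersion = {
      id: string;        // stable prompt identifier, e.g. "support-reply"
      version: string;   // semver string, e.g. "3.1.0"
      template: string;  // prompt text sent to the model
      owner: string;     // owning team or person
      createdAt: string; // creation date (YYYY-MM-DD)
    };

    export const supportReplyPromptV3: PromptVersion = {
      id: "support-reply",
      version: "3.1.0",
      template: "You are a support engineer. Answer with diagnosis, steps, and risk.",
      owner: "ai-platform",
      createdAt: "2026-03-03",
    };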
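
To make the deployed version traceable, the entries need to be stored and looked up by prompt id plus semver. The sketch below is one possible shape, assuming plain `MAJOR.MINOR.PATCH` versions with no pre-release tags; `PromptRegistry`, `parseSemver`, and `compareSemver` are hypothetical helpers, and the `PromptVersion` type is repeated only so the block is self-contained.

```typescript
type PromptVersion = {
  id: string;
  version: string;
  template: string;
  owner: string;
  createdAt: string;
};

// Parses "MAJOR.MINOR.PATCH"; pre-release and build tags are out of scope here.
function parseSemver(version: string): [number, number, number] {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (!match) {
    throw new Error("invalid version: " + version);
  }
  return [Number(match[1]), Number(match[2]), Number(match[3])];
}

// Negative if a < b, positive if a > b, zero if equal (numeric, not lexicographic).
function compareSemver(a: string, b: string): number {
  const [aMajor, aMinor, aPatch] = parseSemver(a);
  const [bMajor, bMinor, bPatch] = parseSemver(b);
  return aMajor - bMajor || aMinor - bMinor || aPatch - bPatch;
}

class PromptRegistry {
  private entries: PromptVersion[] = [];

  // A published id + version pair is never overwritten, so logs stay reproducible.
  register(prompt: PromptVersion): void {
    parseSemver(prompt.version); // reject malformed versions early
    if (this.get(prompt.id, prompt.version)) {
      throw new Error("already registered: " + prompt.id + "@" + prompt.version);
    }
    this.entries.push(prompt);
  }

  get(id: string, version: string): PromptVersion | undefined {
    return this.entries.filter((p) => p.id === id && p.version === version)[0];
  }

  // Highest registered version for a prompt id, or undefined if none exists.
  latest(id: string): PromptVersion | undefined {
    const candidates = this.entries.filter((p) => p.id === id);
    candidates.sort((a, b) => compareSemver(b.version, a.version));
    return candidates[0];
  }
}
```

Refusing to overwrite an existing version is a design choice in this sketch: a logged `id` and `version` then always resolve to the exact template that produced a response.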

Code example 2: Aggregating A/B experiment results

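    -- Compare prompt versions within a single experiment.
    SELECT
      prompt_version,
      COUNT(*) AS samples,
      AVG(user_score) AS avg_score,
      -- 1.0/0.0 keeps the rate fractional on engines where AVG over integers truncates
      AVG(CASE WHEN escalated = true THEN 1.0 ELSE 0.0 END) AS escalation_rate
    FROM llm_response_logs
    WHERE experiment_id = 'exp_prompt_20260303'
    GROUP BY prompt_version
    ORDER BY avg_score DESC;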
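
The query only makes sense if each request was assigned to a prompt version in a stable way before it was logged. One common approach is deterministic hash-based bucketing, sketched here. The names `fnv1a`, `assignVariant`, and `promptVersionFor` are hypothetical, the control version `"3.0.0"` is an assumed placeholder, and a production system would likely use a stronger hash.

```typescript
type Variant = "control" | "treatment";

// 32-bit FNV-1a over UTF-16 code units. The multiply by the FNV prime (16777619)
// is written as shifts so intermediate values stay exactly representable.
function fnv1a(text: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = (hash + (hash << 1) + (hash << 4) + (hash << 7) + (hash << 8) + (hash << 24)) >>> 0;
  }
  return hash >>> 0;
}

// The same experiment and user always land in the same variant.
// Including the experiment id decorrelates assignments across experiments.
function assignVariant(experimentId: string, userId: string, treatmentShare: number): Variant {
  if (!(treatmentShare >= 0 && treatmentShare <= 1)) {
    throw new Error("treatmentShare must be between 0 and 1");
  }
  const fraction = fnv1a(experimentId + ":" + userId) / 4294967296; // in [0, 1)
  return fraction < treatmentShare ? "treatment" : "control";
}

// Assumed mapping from variant to the version string written to the logs.
const promptVersionFor: Record<Variant, string> = {
  control: "3.0.0", // hypothetical previous version
  treatment: "3.1.0",
};

const variant = assignVariant("exp_prompt_20260303", "user-42", 0.5);
console.log(variant, promptVersionFor[variant]);
```

Assigning by user rather than by request keeps one user on one prompt version for the whole experiment, which avoids mixing both variants into a single user's scores.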

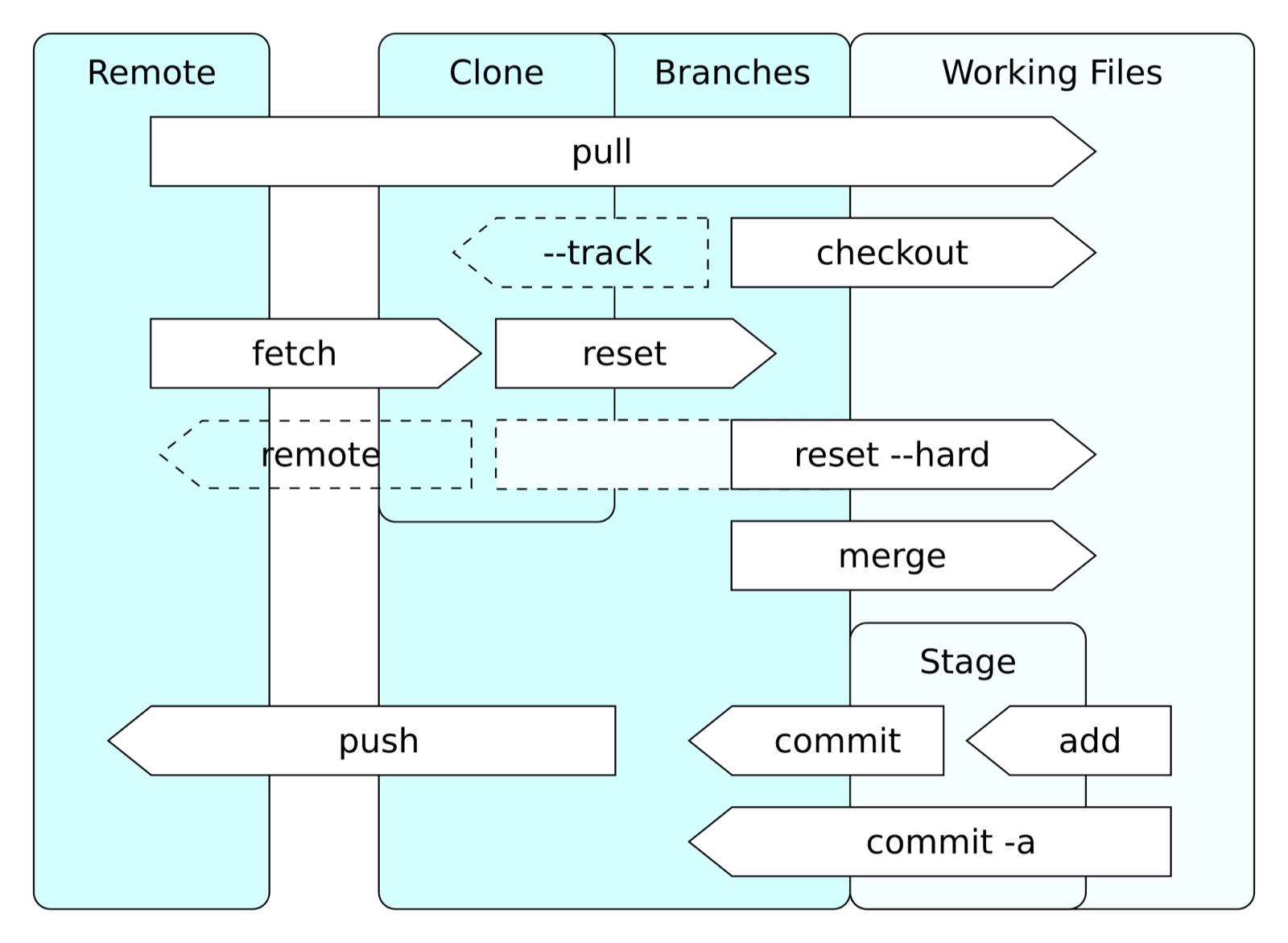

Architecture flow

Tradeoffs

- Adding an experiment step slows down each change but reduces the risk of regressions.

- Maintaining the benchmark set is costly, but it is essential for long-term quality improvement.

- A/B testing measures real user impact, but with small sample sizes the results are easy to misinterpret.
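
The sample-size caveat can be made concrete with a significance check before promotion. The sketch below applies a two-proportion z-test to escalation rates and wraps it in a simple promotion gate. It is an illustration under assumptions: `ArmStats`, `twoProportionZTest`, and `shouldPromote` are hypothetical names, the thresholds are placeholders, and the escalation counts are assumed to be derived from `samples` and `escalation_rate`.

```typescript
type ArmStats = { samples: number; escalations: number };

// Standard normal CDF using the Abramowitz and Stegun 7.1.26 approximation of erf.
function normalCdf(z: number): number {
  const x = Math.abs(z) / Math.SQRT2;
  const t = 1 / (1 + 0.3275911 * x);
  const poly =
    ((((1.061405429 * t - 1.453152027) * t + 1.421413741) * t - 0.284496736) * t + 0.254829592) * t;
  const erf = 1 - poly * Math.exp(-x * x);
  return z >= 0 ? 0.5 * (1 + erf) : 0.5 * (1 - erf);
}

// Two-sided two-proportion z-test with a pooled standard error.
// z is negative when the treatment escalates less often than the control.
function twoProportionZTest(control: ArmStats, treatment: ArmStats): { z: number; pValue: number } {
  if (control.samples <= 0 || treatment.samples <= 0) {
    throw new Error("both arms need at least one sample");
  }
  const pControl = control.escalations / control.samples;
  const pTreatment = treatment.escalations / treatment.samples;
  const pooled = (control.escalations + treatment.escalations) / (control.samples + treatment.samples);
  const se = Math.sqrt(pooled * (1 - pooled) * (1 / control.samples + 1 / treatment.samples));
  if (se === 0) {
    return { z: 0, pValue: 1 }; // no variation at all, nothing to distinguish
  }
  const z = (pTreatment - pControl) / se;
  const pValue = Math.min(1, 2 * (1 - normalCdf(Math.abs(z))));
  return { z, pValue };
}

// Promotion gate: enough data, a lower escalation rate, and a significant difference.
function shouldPromote(
  control: ArmStats,
  treatment: ArmStats,
  minSamplesPerArm: number, // placeholder threshold, tune per product
  alpha: number // significance level, e.g. 0.05
): boolean {
  if (control.samples < minSamplesPerArm || treatment.samples < minSamplesPerArm) {
    return false;
  }
  const { z, pValue } = twoProportionZTest(control, treatment);
  return z < 0 && pValue < alpha;
}
```

With 20 samples per arm the gate refuses to promote regardless of the observed rates, which is the intended behavior: a difference that small a sample cannot support should not trigger a rollout.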

Conclusion

Managing prompt operations with the same rigor as code keeps quality measurable and under control even when the underlying model changes. Version tracking, quantitative evaluation, and promotion gates have to work together to form a stable improvement loop.

Image source

- Cover: source link

- License: CC BY 3.0 / Author: Daniel Kinzler

- Note: The freely licensed image was downloaded from Wikimedia Commons and optimized to a 1600px JPG.